iStockphoto

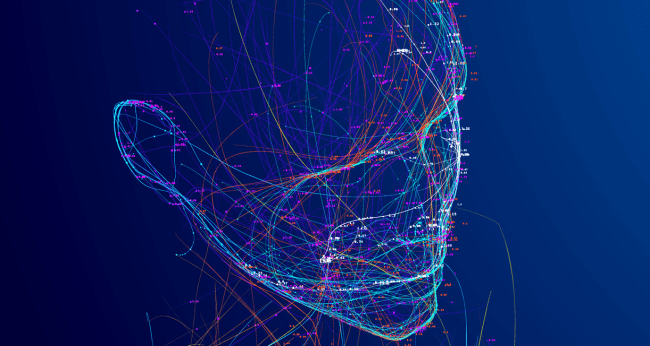

Mind-reading artificial intelligence tools can now use brain scans to decode the words and sentences that people are thinking.

The tool was developed by scientists at the University of Texas at Austin. They pressed ahead even though one-third of artificial intelligence researchers have said they believe AI could one day cause a nuclear-level catastrophe. (Researchers at Oxford and Google DeepMind have also said AI will “likely” eliminate humanity.)

Of course, as always, the scientists claim to have altruistic reasons for doing such a thing.

New Scientist reports that Alexander Huth and his colleagues in the computer science and neuroscience departments at the University of Texas at Austin “developed a machine learning-driven AI model that can work out word sequences that match, or closely resemble, the input stimulus from people’s brain activity and the meaning behind them.”

They then tested the brain decoder on people who were imagining telling stories or watching short silent films.

One test participant listened to a story that went: “that night I went upstairs to what had been our bedroom and not knowing what else to do I turned out the lights and lay down on the floor.”

The AI translated the person’s brain activity into: “we got back to my dorm room I had no idea where my bed was I just assumed I would sleep on it but instead I lay down on the floor.”

While that isn’t a word-for-word match, it is pretty darn close, and the AI will only get better at it.

Scientists are basically developing an artificial intelligence brain decoder

“The fact that the decoder can get the gist of the sentences is very impressive,” says Anna Ivanova at the Massachusetts Institute of Technology. “We can see, however, that it still has a long way to go. The model guesses bits and pieces of meaning and then tries to put them together, but the overall message typically gets lost – likely because the captured brain signals reflect what concepts a person is thinking about, such as ‘talk’ or ‘food’ for example, but not how these concepts are related.”

There are two components to improving decoding models, says Jack Gallant at the University of California, Berkeley: better brain recordings and more powerful computational models. While fMRI capability hasn’t progressed much in the past decade, computational power and language models have.

“This work dials the computational model up to 11,” says Gallant. “They developed a fully modern, high-power, language neural network and used that as the basis for building the decoding model. This is really the innovation that is most responsible for producing such great results.”

The scientists claim they are developing the model in the hope that it will someday help people who cannot speak to communicate. They also claim it could advance basic and clinical neuroscience, as well as research into mental health conditions.

Mo Gawdat, the former Chief Business Officer of Google’s research and development division, previously warned that many of the researchers developing artificial intelligence are “creating God.”

But no worries. Ivanova says, “If you didn’t listen to several hours of podcasts while lying in an MRI machine, Huth and his colleagues probably can’t decode your thoughts – at least not yet.”

On the other hand, Elon Musk, who called the commercialization of artificial intelligence “our biggest existential threat,” recently said that artificial intelligence will overtake humans within the next five years.

His timeline might be a little too optimistic. And it will all be our own fault.